    #fn to show np images with cv2 and close on any key press

    (thresh, img_bin) = cv2.threshold(img, 250, 255, cv2.THRESH_BINARY | cv2.THRESH_OTSU)

    # A vertical kernel of (1 X kernel_length), which will detect all the vertical lines from the image.
    verticle_kernel = cv2.getStructuringElement(cv2.MORPH_RECT, (1, kernel_length))
    # A horizontal kernel of (kernel_length X 1), which will help to detect all the horizontal lines from the image.
    hori_kernel = cv2.getStructuringElement(cv2.MORPH_RECT, (kernel_length, 1))
    # A kernel of (3 X 3) ones.
    kernel = cv2.getStructuringElement(cv2.MORPH_RECT, (3, 3))

    # Morphological operation to detect vertical lines from an image
    img_temp1 = cv2.erode(img_bin, verticle_kernel, iterations=3)
    verticle_lines_img = cv2.dilate(img_temp1, verticle_kernel, iterations=3)

Convert OCR PDF to Excel table code

Note: Most of this code is from a Medium blog by Kanan Vyas here:

Nanthancy's answer is also accurate. I used the following script for getting each box and sorting it by columns and rows.

From here we sort the box field contours using imutils.sort_contours() with the top-to-bottom parameter. Next we find contours and filter using contour area, then extract each ROI.

Here's a visualization of each box field and the extracted ROI.

    import cv2

    # Load image, grayscale, Otsu's threshold
    gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)
    thresh = cv2.threshold(gray, 0, 255, cv2.THRESH_BINARY_INV + cv2.THRESH_OTSU)[1]

    # Remove text characters with morph open and contour filtering
    kernel = cv2.getStructuringElement(cv2.MORPH_RECT, (3,3))
    opening = cv2.morphologyEx(thresh, cv2.MORPH_OPEN, kernel, iterations=1)
    cnts = cv2.findContours(opening, cv2.RETR_TREE, cv2.CHAIN_APPROX_SIMPLE)
    cnts = cnts[0] if len(cnts) == 2 else cnts[1]
    for c in cnts:
        # area_cutoff is a placeholder name; the cutoff value is not given here
        if cv2.contourArea(c) < area_cutoff:
            cv2.drawContours(opening, [c], -1, (0,0,0), -1)

    # Repair table lines, sort contours, and extract ROI
    close = 255 - cv2.morphologyEx(opening, cv2.MORPH_CLOSE, kernel, iterations=1)
    cnts = cv2.findContours(close, cv2.RETR_TREE, cv2.CHAIN_APPROX_SIMPLE)
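Sorting boxes by rows and then by columns, as mentioned above, can be sketched without any image library once each box is an `(x, y, w, h)` tuple. This is not the script from the answer: the function name `sort_boxes_into_rows` and the default tolerance (half the mean box height) are assumptions made for illustration.

```python
def sort_boxes_into_rows(boxes, row_tolerance=None):
    """Group (x, y, w, h) boxes into rows by vertical centre, each row sorted left to right."""
    if not boxes:
        return []
    if row_tolerance is None:
        # Assumption: boxes whose centres differ by less than half the mean
        # box height belong to the same table row.
        row_tolerance = sum(h for _, _, _, h in boxes) / len(boxes) / 2

    rows = []
    for box in sorted(boxes, key=lambda b: b[1] + b[3] / 2):
        center_y = box[1] + box[3] / 2
        if rows and abs(center_y - rows[-1]["center"]) <= row_tolerance:
            rows[-1]["boxes"].append(box)
        else:
            rows.append({"center": center_y, "boxes": [box]})

    # Within each row, order the boxes by their left edge.
    return [sorted(row["boxes"], key=lambda b: b[0]) for row in rows]


# Four cells of a 2 x 2 table, given out of order and with slight vertical jitter.
cells = [(110, 12, 90, 40), (10, 10, 90, 40), (10, 60, 90, 40), (110, 58, 90, 40)]
table = sort_boxes_into_rows(cells)
```

Grouping by centre with a tolerance, instead of sorting on the raw `y` value, keeps cells of the same row together when a scan is slightly skewed.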

Opening effectively turns the text into tiny noise, so we find contours and filter using contour area to remove them. Then we repair the horizontal/vertical lines and extract each ROI: we morph close to fix any broken lines and smooth the table.

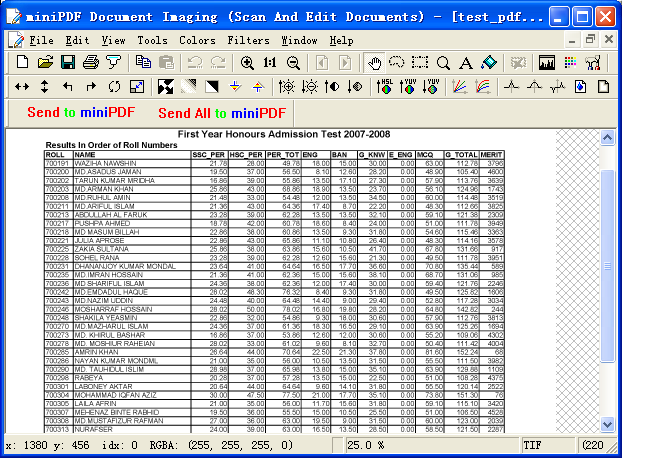

I have scanned images which have tables as shown in this image:

I am trying to extract each box separately and perform OCR, but when I try to detect horizontal and vertical lines and then detect boxes it's returning the following image:

And when I try to perform other transformations to detect text (erode and dilate), some remains of lines are still coming along with the text like below:

I cannot detect text only to perform OCR, and proper bounding boxes aren't being generated like below:

I cannot get clearly separated boxes using real lines. I've tried this on an image that was edited in Paint (as shown below) to add digits and it works.

I don't know which part I'm doing wrong, but if there's anything I should try or maybe change/add in my question please please tell me.

    # initialize kernels for table boundaries detections
    if(th3.shape=.7*medianheight and w/h > 0.9):
    #Now we have badly detected boxes image as shown

Here's a continuation of your approach with slight modifications. Load image, convert to grayscale, and Otsu's threshold. We create a rectangular kernel and perform opening to only keep the horizontal/vertical lines.